|

zhece.wang@mail.utoronto.ca I am currently working as an Algorithm Engineer (Machine Learning Engineer, MLE) at Baidu, focusing on large language models and intelligent agent systems. I have also received a Ph.D. offer in Electrical and Computer Engineering from The Edward S. Rogers Sr. Department of Electrical and Computer Engineering at the University of Toronto, which is currently deferred due to visa processing. Earlier, I earned my B.Eng (2021) in Electrical Engineering from Nankai University, and M.Eng (2025) in Computer Science from the Institute of Software, Chinese Academy of Sciences. My current research interests lie at the intersection of large language model reasoning, mathematical theorem proving, and AI systems, with both academic and industrial perspectives.

[New] I am open to academic collaborations and industry opportunities.

Please feel free to contact me if you would like to explore any potential cooperation.

|

|

|

|

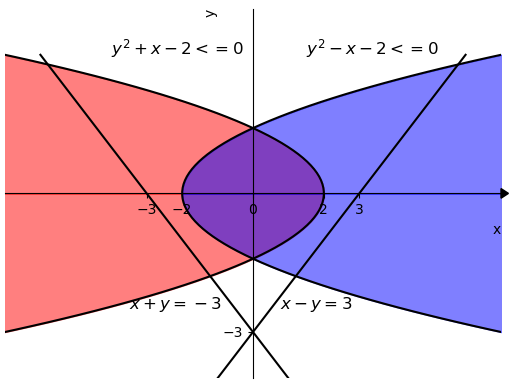

SMT SolvingComplete MethodIncomplete Method / Local Search |

|

(*): Equal contribution |

|

Zhonghan Wang

[ASE 2025]

The 40th IEEE/ACM International Conference on Automated Software Engineering

arXiv | code | paper | poster | slides | video | |

|

Zhonghan Wang, Bohua Zhan, Bohan Li, Shaowei Cai

[VMCAI 2024]

The 25th International Conference on Verification, Model Checking, and Abstract Interpretation

arXiv | code | paper | slides | video | |

|

|

| Oct 2022 - Aug 2023 |

Alibaba Group – Alimama Advertising Technology Department, Beijing, China Algorithm Intern Worked on constrained optimization for large-scale ad allocation using local search–based methods. Modeled allocation as a constrained optimization problem with hard constraints (e.g., budget, feasibility) and soft constraints (e.g., CTR, CVR, competition coefficients). Designed efficient search strategies and objective formulations to balance multiple business metrics and improve overall system performance. |

| Nov 2025 - Present |

Ant Group – Ant Digital, Hangzhou, China Algorithm Intern Worked on supervised fine-tuning (SFT) and reinforcement learning for large-scale prover models. Designed fine-grained, rubric-based reward signals (e.g., correctness, step validity, reasoning strategy) to improve RLHF stability and performance. Built a distributed RL training pipeline across multiple machines (rollout and training nodes). Developed data pipelines for Lean-based formal proofs, enabling scalable training and improving model performance on formal reasoning tasks. |

|

|

| Artifact Evaluation Committee | VMCAI 2025 |

|

|

| Gold medals of Z3++ in SMT-COMP QF_NRA Single Query (main developer) (2022, 2023) |

| Globalink Research Internship offer (Alberta University, Canada) (2020) |

| Outstanding Prize for Tianjin College Student Mathematical Competition (Ranked 17) (2018) |

|

|

| London 2024 (POPL) | Google Photos |

| Seoul 2025 (ASE) | Google Photos |

|

|

|

|